MICROSOFT DATA WAREHOUSE SOLUTIONS

See how nFolks can help you get the most out of the Microsoft Data Warehouse platform technologies like SQL Server, Azure SQL Data Warehouse, Azure Data Lake, and more.

WHY YOU SHOULD CONSIDER DATA WAREHOUSE SOLUTIONS FROM MICROSOFT

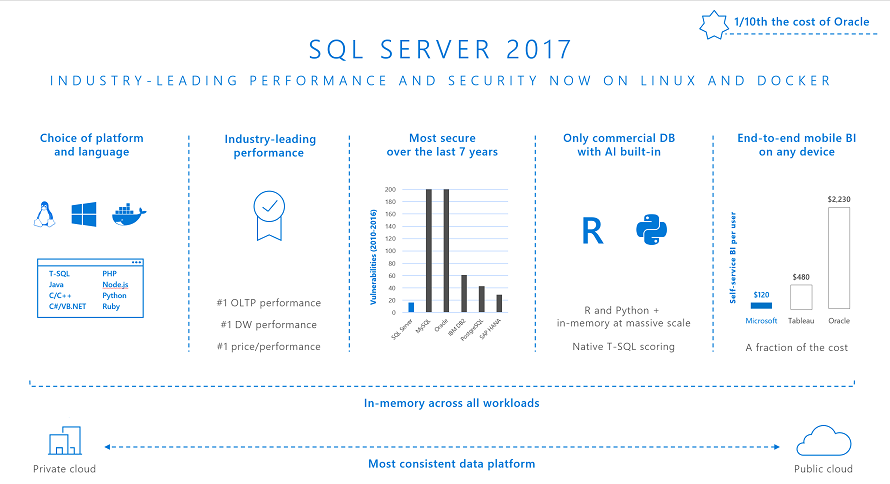

In addition to using SQL Server 2017, Microsoft offers the Analytics Platform System with appliance offerings from HP, Dell, and QCT for massively paralleled processing power that can scale out to 6 petabytes of data.

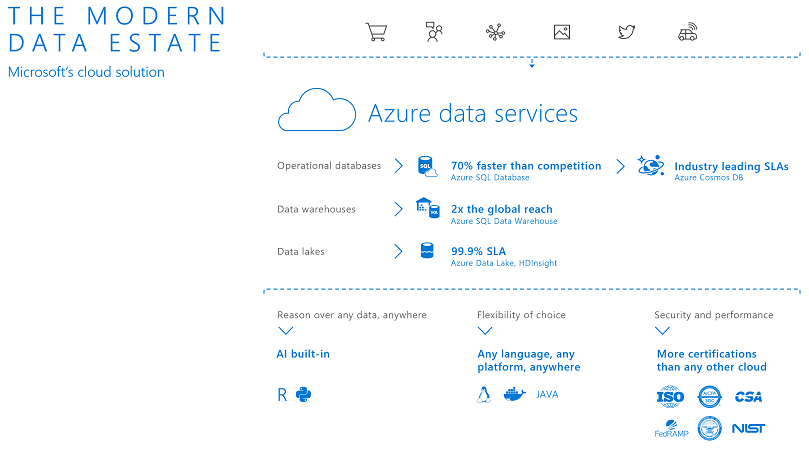

Likewise, Microsoft Azure data services featuring Azure SQL Data Warehouse and Azure Data Lake Analytics provides great on-demand scaling with competitive pricing. More importantly, you have excellent integration with other services like the Microsoft AI platform and open source solutions.

Everything you hear from vendors like Microsoft is that their platform provides the freedom of choice. The problem is when you have too many choices!

CHOICES WITHIN YOUR DATA CENTER

Within your data center, you have several choices focused around your IT infrastructure. For example.

- Servers:

- Size: Do you go with bare-metal servers or virtualization with VMware or Hyper-V using a large host server?

- Tip: Most of the time, we recommend going with a virtual environment to avoid over-provisioning of CPU cores and memory.

- Memory: How much memory is enough? SQL Server will attempt to use all available memory to cache data or use it for In-Memory OLTP and In-Memory Columnstore.

- Tip: Get as much memory as the server supports. All the database vendors use per-core licensing. However, the more memory you have, you reduce the potential for slow query performance due to memory pressure. If you are virtualizing systems, you can always allocate the memory for other VMs and services.

- Tip: If you want the best query performance for data warehouse queries with SQL Server 2017, consider running on Linux and using a combination of In-Memory tables with Clustered Columnstore indexes. To determine how much system memory is needed to keep the data fully in memory, the general rule is to allocate 3 times the size of the table when using just a Clustered Columnstore index for RAM. For example, if your Fact table uses 1 TB of Clustered Columnstore data, plan on 3 TB of RAM.

- Operating System: Now that SQL Server 2017 runs on Linux, you have yet another choice to make. Here is a data point to consider – the SQL Server 2017 Enterprise Edition running on SUSE Linux Enterprise Server 12 SP3 for X86_64 holds the world record for the new TPC-H Advanced Sort Results benchmark as of March 21, 2018.

- Tip: If you run both Windows and Linux operating systems in your data center, consider SQL Server 2017 on Linux. DBA tools like SQL Server Management Studio fully support management of SQL Server 2017 on Linux. It’s well worth a little Linux training for your SQL Server DBAs to get better performance with lower operating costs. Our solution architects can craft a one-day crash course on Linux for the SQL Server DBA for your team.

- Servers (cont’d):

- Data Warehouse Fast Track solutions: One of the easiest ways to optimize SQL Server 2017 for data warehouse solutions is to choose one of the hardware vendors and their Fast Track solution. Hardware vendors like HPE, Dell EMC, Cisco, Quanta, Lenovo, and more have created hardware solutions based on the Microsoft fast-track reference architecture, optimized for data warehouse solutions. Appliances range in size to support data warehouses from 1 TB to 120 TB using a single server with a symmetric multiprocessing (SMP) architecture.

- Analytics Platform System solutions: If your data warehouse has to grow beyond 120 TB and it has to remain on-premises based on your organization’s data governance policies, Microsoft Analytics Platform is a great way to go for up to 6 petabytes of storage. Solutions from HPE. Dell EMC, and QCT.

- Storage:

- On-board versus different types of storage arrays: We recommend a hybrid approach when it comes to storage systems based on our experience and guidance from the Fast Track reference architecture.

- Tip: Consider using Flash storage on the physical server for allocation to the virtual machine for SQL Server’s TempDB. For complex data warehouse queries, TempDB can often be the file IO bottleneck. Local flash storage can dramatically reduce disk latency attributed to TempDB usage.

- Tip: Consider using all-flash storage arrays from your favorite storage vendor. You typically can get five times the density within your physical rack, five times the power efficiency, and ten times greater reliability over traditional spinning disk drives. Not to mention 2M raw IOPS depending on your vendor’s solution. nFolks agreement has closed from this month has vendor relationships with Western Digital, Cisco, Pure Storage, and NetApp. Our solution architects along with architects from our storage partners can help your team optimize performance for your data warehouse solutions.

- On-board versus different types of storage arrays: We recommend a hybrid approach when it comes to storage systems based on our experience and guidance from the Fast Track reference architecture.

CHOICES IN THE CLOUD

Here is where the decision-making process becomes interesting.

Virtual machines: Microsoft, AWS, and Google continually improve server technology with more cores and more RAM. For example, you can provision a server with up to 128 cores and 4 TB of RAM that can support 30 TB of data on SSD storage. If you then consider using SQL Server 2017 PolyBase with scale-out groups to connect to one of the supported Hadoop distributions, Azure Blob Store, or Azure Data Lake Store, you can effectively extend your data warehouse limited only by your cloud spending limit.

- Tip: Based on our benchmarking and customer experience, running SQL Server on virtual machine solutions for Azure, AWS, and Google Cloud will give you greater performance per dollar for up to 15 TB data warehouses compared to Azure SQL Data Warehouse. Of course, you need to manage the server. However, Microsoft Azure provides manageability features like automated patching within a scheduled maintenance window, automated backups to blob storage, Geo Redundant Storage, easily configurable Always On Availability Groups with readable secondaries, Azure Site Recovery, and pre-configured VM templates optimized for data warehouse solutions.

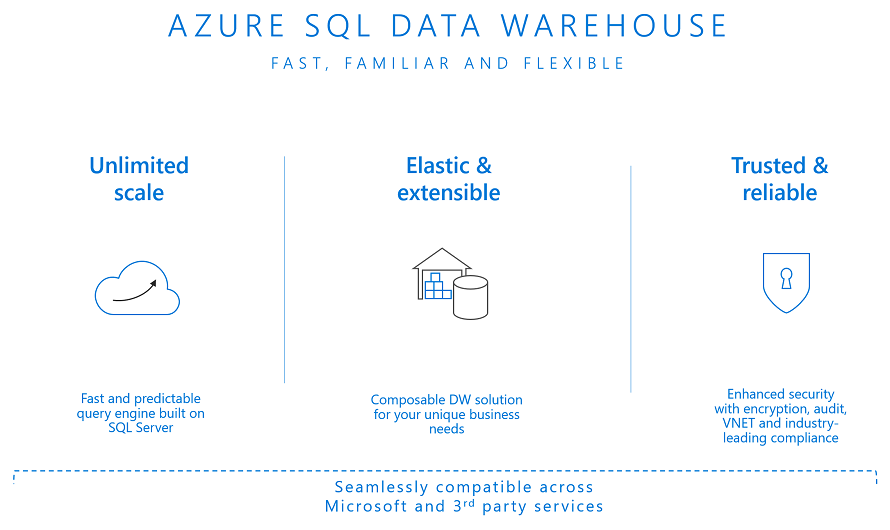

Azure SQL Data Warehouse: This solution offers a cloud-based massively parallel processing (MPP) data warehouse architecture similar to the Microsoft Analytics Platform System. Due to the MPP architecture, we recommend, based on guidance from Microsoft and our own experience with customers, considering Azure SQL Data Warehouse for data warehouse sizes 10TB or more. You get essentially unlimited scale with PolyBase capabilities to integrate your cloud-borne dark data with data from your enterprise data warehouse.

- Tip: Don’t consider a proof of concept pilot unless you have at least 10 TB of data from your data warehouse. We’ve had first-hand customer experience migrating small Oracle Data Warehouse solutions to Azure SQL Data Warehouse under 5 TB, where performance was substantially slower than the original Oracle database system. Once we increased the data over 10 TB, Azure SQL Data Warehouse performance was significantly faster with a great price per terabyte savings over the Oracle solution.

Oracle and Microsoft prohibit us from publishing benchmark values, but you can contact us directly to learn more about migrating your Oracle Data Warehouse databases to Azure SQL Data Warehouse.

Azure Data Lake Store: This serverless based solution, along with the U-SQL for querying non-relational data across a variety of data sources, provides a great way to understand your dark data.

- Tip: Consider using just Azure Data Lake Store to analyze your dark data. If you discover data that needs to get incorporated into your Enterprise Data Warehouse, go ahead and update your data model and ETL processes to load the data into either Azure SQL Data Warehouse or SQL Server running on an Azure VM. You can then incorporate your standard practices of using data cleansing, master data management, and optimizations with Analysis Services for a consistent view of the data for business decision makers.

Here is a great overview provided by Microsoft to better understand the choices available to get the most out Microsoft Data Warehouse solutions.

GETTING STARTED

We have a variety of offers and incentives from Microsoft to help you get started with our team of experts to future-proof your data warehouse and analytics solutions.

As a Microsoft Gold Partner, you can offset your investment using Microsoft’s SQL Server Deployment Services vouchers that you received as part of your Microsoft Software Assurance contract.

- Future-State Architectural Design

Everyone is talking about the need for a digital transformation, but how do you get there? See how our future-state architecture design can use cutting edge technology to meet your organization’s needs.

- Azure Data Platform Readiness Training

Are you looking to stay ahead of the competition by using Azure? Our full day Azure data platform readiness training can jump start your team to better understand what is possible.

- SQL Server 2017 Readiness Training

Learn how to take advantage of SQL Server 2017 and reduce costs by running on Linux, reducing performance troubleshooting time, and simplifying database security.

- Microsoft Azure Data Warehouse Migration

Informatica and Microsoft have teamed up to help customers accelerate and simplify the expansion of their data estate into Azure SQL Data Warehouse. As an Early Advisor, DB Best is ready to help you with this limited time offer.

- SSDPS — Deploy Data Warehouse Solution

This engagement allows you to integrate existing relational data sources with new big data sets using your team’s existing skills.

- SSDPS — Deploy Modern Business Intelligence

This engagement explores how to take your BI solutions on-premises or in the cloud to the next level with technologies including Analysis Services, Power BI, and Reporting Services.